Boards are increasingly being asked to oversee artificial intelligence as both a strategic capability and a source of enterprise risk. In many organizations, that oversight is already complicated by a quieter reality: AI is being used well beyond the boundaries of approved tools, formal governance frameworks, and security review.

In practice, unapproved AI use is already embedded in everyday enterprise workflows, including at some senior levels, often without visibility from IT, legal, or risk functions. For directors, general counsel, and governance professionals, the implications are distinct. The information handled at board level is more sensitive, more material, and more consequential when it intersects with ungoverned technology.

Understanding where this exposure lives, why it persists, and how boards can respond is quickly becoming part of modern governance.

Where Shadow AI Creates Board‑Level Exposure

Unapproved AI use frequently arises from efficiency‑seeking behavior rather than malicious intent. Directors and executives may summarize materials, draft communications, or analyze documents using tools that sit outside approved enterprise environments. The activity itself often feels benign.

What changes at board level is the risk concentration.

Directors routinely engage with nonpublic financial information, M&A diligence materials, succession plans, draft disclosures, regulatory correspondence, and sensitive investor communications. When that information is introduced into unvetted AI systems, it may create governance, confidentiality, and data protection considerations that are difficult to fully remediate.

Independent research illustrates how widespread this behavior has become. Menlo Security’s 2025 report found that 68% of employees use free‑tier generative AI tools via personal accounts, and 57% report inputting sensitive data into those tools. Similar findings have been cited by IBM and others examining the cost implications of ungoverned AI use.

At board level, the same behaviors can carry potentially outsized impact because the information involved is often material.

Governance‑specific data reinforces the concern. A 2025 survey reported by Corporate Compliance Insights found that 69% of board professionals already use generative AI for governance‑related work, while Nasdaq’s recent Governance Pulse report found that only 8% of directors rely on formally approved, company‑provided AI tools. The gap between adoption and governance is where exposure tends to accumulate.

That gap is compounded by uneven institutional readiness. Multiple global director surveys indicate that while AI risk is increasingly discussed, many boards still lack structured ownership, committee accountability, or clear policies governing director‑level AI use.

Why the Stakes Are Higher in the Boardroom Than Elsewhere

Shadow AI is not unique to boards, but no other function concentrates fiduciary, regulatory, and reputational sensitivity in the same way. Even a single document inadvertently processed through an unsecured AI platform can introduce risk that is challenging to contain.

According to IBM’s Cost of a Data Breach Report 2025, breaches involving ungoverned or poorly governed AI tools tend to take longer to detect and cost significantly more to remediate than incidents in environments with mature governance controls. While these figures capture direct financial impact, they do not account for reputational damage, market reaction, or regulatory scrutiny that may follow disclosure of board‑level information.

Related research from Proofpoint and other security providers suggests that AI‑related data exposure often involves personally identifiable information or proprietary corporate materials. From a governance perspective, these scenarios translate directly into oversight and accountability considerations.

There is a structural irony at play. Boards are increasingly tasked with overseeing AI risk across the enterprise while often being among the least governed AI users themselves. Research from EY’s Center for Board Matters shows that nearly half of Fortune 100 companies now cite AI risk as part of board oversight, yet many still lack consistent governance mechanisms to manage AI use in practice.

When “Vibe-Coding” Custom AI Tools Become a Governance Risk

As generative AI capabilities have become more accessible, an increasing number of in-house teams are considering building or experimenting with custom AI‑enabled tools for both general and board use, a practice often called vibe-coding. “Vibe‑coding” is industry shorthand for an AI‑first development approach where software is created primarily through natural‑language prompting, allowing teams to iterate rapidly at the level of intent rather than hand‑coding implementation details. It is often used for fast prototyping and experimentation, but typically requires additional review, testing, and governance before being suitable for enterprise‑grade production use. In some cases, these efforts demonstrate real functional promise. For example, companies might aspire to build their own tools for rapid summarization, document analysis, or workflow automation that can feel well suited to board needs.

The governance challenge is that building a working solution is not the same as deploying a system that can be trusted at board level without appropriate governance controls.

At that level, expectations shift. Security architecture, auditability, access controls, data handling practices, continuity, and accountability matter as much as functionality. Informal or bespoke tools, however clever, can be difficult to maintain, document, and defend under regulatory, litigation, or shareholder scrutiny. What works in a pilot or internal experiment may not hold up when governance standards, incidents, or external review are introduced.

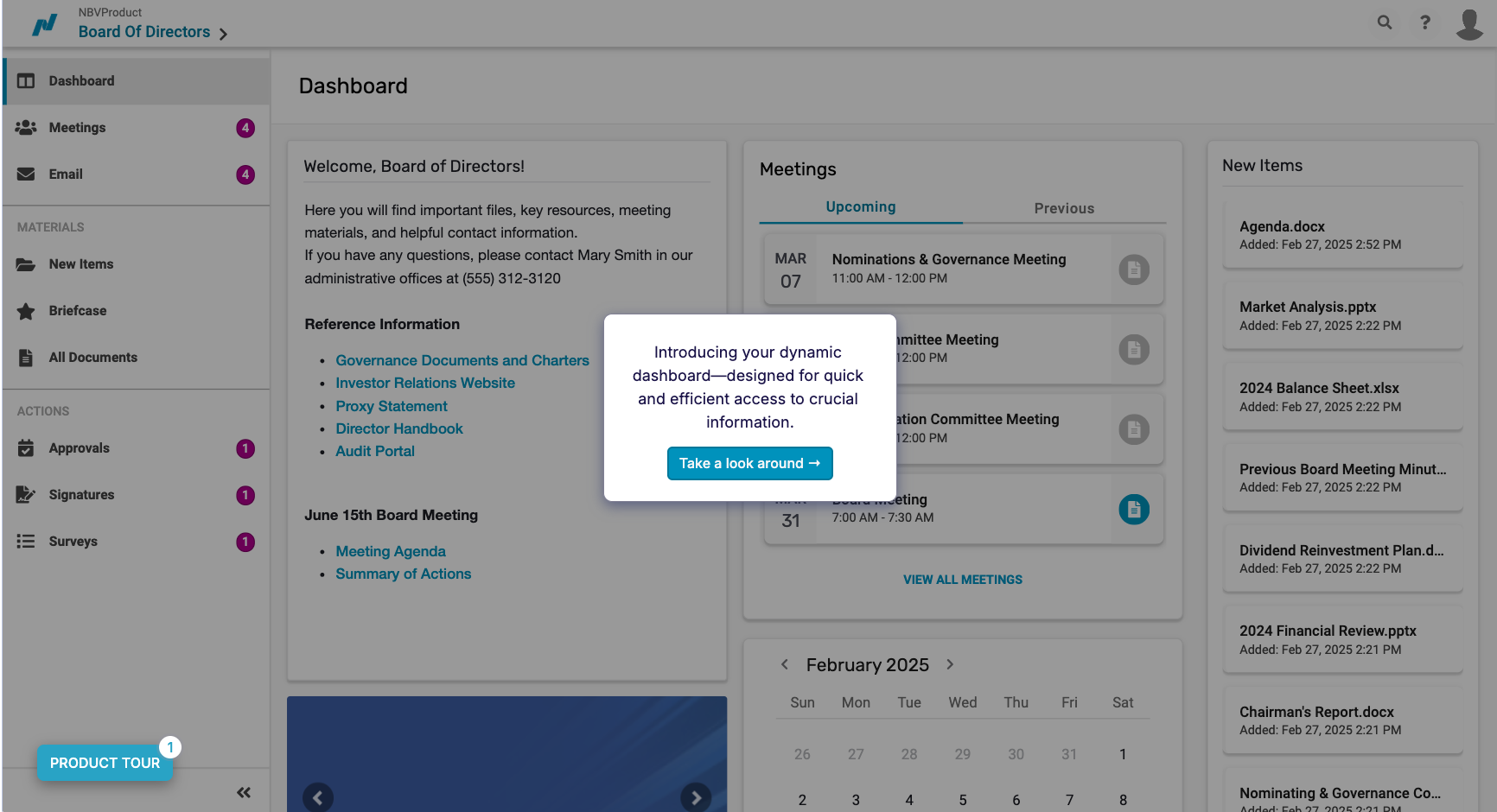

Purpose‑built board governance platforms like Nasdaq Boardvantage are designed to address these constraints. Their value extends beyond individual features to include layered security, detailed audit trails, role‑based access controls, and operational resilience designed specifically for director workflows. These platforms are built to operate predictably under scrutiny, not simply to perform well in day‑to‑day use.

For directors serving on multiple boards, consistency and reliability are not preferences; they are necessities. Governance teams likewise need platforms that align with enterprise security standards and can withstand examination from regulators, auditors, and counsel. From an oversight perspective, custom solutions may introduce additional governance risks unless they meet the same standards applied to other critical board infrastructure.

This is why many governance teams look to purpose‑built platforms that have been designed with enterprise security, auditability, and board‑level risk requirements in mind. Notably, Nasdaq has outlined how it approached introducing AI into board governance workflows through a secure, governed collaboration with Microsoft, illustrating how AI can be adopted in the boardroom without compromising trust, control, or accountability.

Is “AI‑Powered” Creating Confusion in Vendor Evaluation?

“AI‑powered” has become a near‑universal marketing claim across enterprise software. In governance contexts, the challenge is not whether AI capabilities exist, but whether they are appropriate for sensitive board materials.

When evaluating AI‑enabled governance tools, boards and governance teams commonly consider several factors:

- Domain relevance

AI features intended for board use should reflect governance‑specific contexts. Generic models applied to board materials may be susceptible to producing outputs that are incomplete or misleading, creating downstream risk.

- Operational maturity

Early‑stage AI features may be acceptable in lower‑risk environments but may be inappropriate for workflows involving material nonpublic information. Boards often ask how capabilities have been tested, monitored, and updated over time.

- Data architecture and controls

The reliability of AI outputs depends on how underlying data is structured, secured, and governed. Strong interfaces cannot compensate for weak data foundations.

These considerations are less about innovation and more about institutional trust.

What Is the Board’s Role in AI Governance?

The board’s responsibility generally operates on two levels: governing the organization’s overall AI posture and modeling the governance behaviors it expects from management.

On the oversight front, progress has accelerated but remains uneven. Surveys from the National Association of Corporate Directors and EY show that while AI now appears more frequently on board agendas, fewer boards have formally embedded AI governance into committee charters or accountability structures. Awareness has increased faster than operational ownership.

The business case for board‑level AI fluency is increasingly well documented. Research from MIT’s Center for Information Systems Research has found that organizations with digitally and AI‑savvy boards tend to outperform peers on key financial metrics, while those without such fluency underperform relative to their industries.

The second level is credibility. A board that expects disciplined AI governance from management while operating outside approved AI environments may weaken its authority and introduce cultural inconsistency.

In practice, director education is a pragmatic starting point. Effective learning tends to occur through hands‑on engagement with vetted tools inside governed environments, rather than one‑off briefings. Governance teams also play a critical role in setting expectations. When leadership uses approved systems and follows established guardrails, adoption tends to follow.

Boards may also benefit from periodically reassessing their governance technology stack. Platforms selected even a few years ago may differ materially from today’s offerings in both capability and security posture. As investment in AI‑native enterprise applications accelerates, governance solutions should evolve alongside broader enterprise standards.

Where AI Governance Becomes Real

Shadow AI is not a future concern. It is a present governance consideration, and the boardroom is where its consequences are often most concentrated.

The gap between the AI tools directors are using and the tools organizations have approved is real, measurable, and solvable if it is treated as an oversight issue rather than a technical footnote.

Boards that navigate this well tend to view AI governance as a lived practice rather than a policy exercise. Providing secure, purpose‑built tools, setting clear expectations for their use, and normalizing governed AI adoption at the top of the organization are increasingly viewed as markers of effective oversight.

In an AI‑enabled enterprise, effective governance often begins in the boardroom.

Learn how Nasdaq Boardvantage® supports governed board workflows designed with robust security, including AI‑enabled capabilities designed for sensitive governance environments. Explore a self‑guided tour of the Nasdaq Boardvantage board portal.

Discover the latest boardroom trends in our 3rd Annual Nasdaq Governance Pulse Report.